(Originally posted 2013-02-23.)

When I first heard of Flash Express as part of the zEC12 announcement – some time before announcement – I thought of one use case above all, and one of particularly poignant resonance with some of my readers: Dump capture amelioration.

Then, in the marketing materials, I heard of others. And the discussions have grown more numerous recently. So it’s time I expressed (pardon the pun) my opinion.

The two cases I hear most often are:

- Down In The Dumps

- Market Open

But there is a third:

- Close To The Edge

These names are, of course, glib. The actual scenarios themselves are fuzzy in a good way: Customers will each express their needs differently, but those needs will fit one of these main themes.

So let me talk about each one.

Down In The Dumps

For many customers it’s imperative that dumps – especially of major address spaces – complete quickly. Particularly the dump capture portion.

As you probably know, when an address space is dumped the system halts work while the dump’s capture phase begins. The capture phase writes to a data space, which is ideally backed by real memory. There are a number of things that can go wrong with this, in the worst case leading to tens of minutes of dump capture and perhaps hours of service recovery (possibly involving a sysplex-wide restart).

(If you’ve been through this you really don’t need me to labour the point. If you haven’t then please still take note.)

In the worst case the dump doesn’t get captured. That means the diagnostics needed to explain the problem that triggered the dump, and potentially to lead to a resolution, won’t be (fully) available.

Market Open

I always think it’s useful to draw a timeline – whether on paper or just in your head. If you consider a 24 hour period the memory usage can be very different, say, overnight from the online day.

- Overnight batch users, such as sorts, compete very effectively for real memory page frames: It’s entirely possible online address spaces, such as CICS regions, can lose their pages to paging disk.

- At the start of day (classically when the markets open, though that’s a financial services term) online services roar into life. In fact many applications experience a spike in demand, which then settles down.

Coping with “market open” is about the time to recover pages to memory (and the time to furnish new pages where the online application needs to grow its own memory footprint).

Close To The Edge

While I see many customer systems with lots of spare memory – particularly on z196 and zEC12 machines – there are cases where memory is less plentiful.

I wouldn’t advocate letting a system page as a day-to-day occurrence. Equally it’s often beneficial to consider the value of using idle memory, say for bigger DB2 buffer pools.

But a fair number of systems achieve stasis without a large amount of free memory. As workloads grow, or even where there are unusually large fluctuations in usage, this happy medium can become compromised.

What They All Have In Common

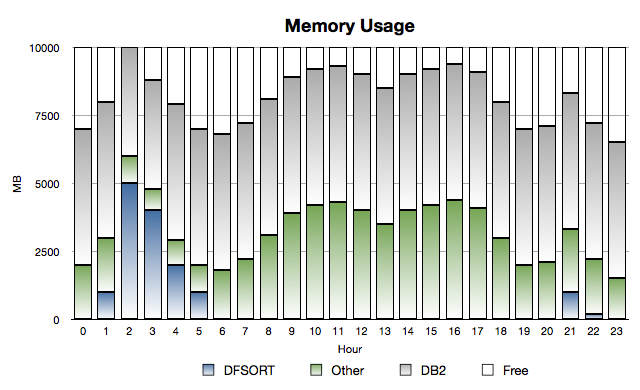

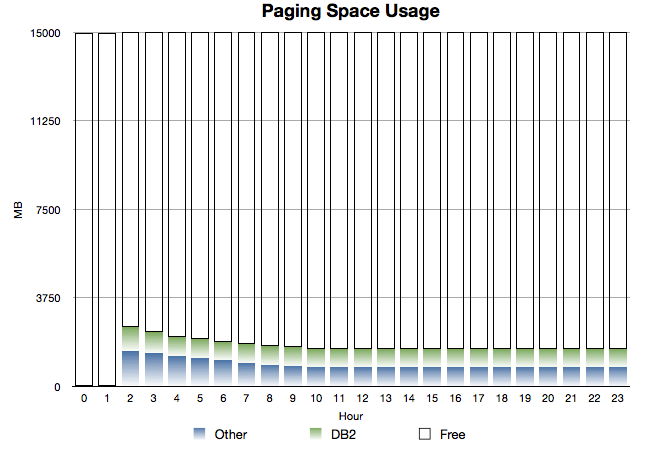

Consider the following two graphs:

and

These are timeline graphs (as I just advocated).

While this is a situation where DFSORT spikes in usage early in the morning, it could (with different names and timing) be a case where a large address space suddenly has to be dumped.

The salient features are:

- Normally some of the 10GB of memory is free, but not an enormous amount. (And you see the classical “double hump” usage profile.)

- The category called “Other” is everything in the system apart from DB2 and DFSORT. So System, CICS regions, TSO, Daytime Batch etc.

- DB2 usage is relatively static – which is generally true.

- In the early hours of the morning DFSORT batch jobs come in and grab a large proportion of storage. Under some circumstances they can, as here, push other pages to page data sets. Particularly poor competitors include CICS regions and less-used (in the night) DB2 buffer pools.

- It takes a while for DB2 and Other to recover the pages they need.

- Some pages remain on page data sets all the time.

This would be a mixture of two things:

- Pages that aren’t referenced again.

- Pages that are referenced again but the page data set slots aren’t freed up. (Recall that when a page is stolen from memory if it’s unchanged we don’t write it out – if there’s a copy in a page data set slot – so there’s benefit in not freeing up the slot for a paged-in page.)
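The slot-retention point can be sketched as a toy model. This is purely illustrative Python, not how z/OS implements it: the `Page` class and the `steal` function are hypothetical names, and the only rule modelled is the one just described (an unchanged page with a valid slot copy needs no write when stolen).

```python
from dataclasses import dataclass


@dataclass
class Page:
    changed: bool        # modified since it was last paged in?
    has_slot_copy: bool  # is a valid copy still held in a page data set slot?


def steal(page: Page) -> int:
    """Return the number of page-out writes needed to steal this page's frame."""
    if not page.changed and page.has_slot_copy:
        # The slot copy is still good, so the frame can be reused without I/O.
        return 0
    # Otherwise the page has to be written out before the frame is reused.
    page.has_slot_copy = True
    page.changed = False
    return 1


kept_slot = Page(changed=False, has_slot_copy=True)    # slot was not freed on page-in
freed_slot = Page(changed=False, has_slot_copy=False)  # slot was freed on page-in
print(steal(kept_slot), steal(freed_slot))
```

In this model the page whose slot was kept costs no write when it is stolen again, while the one whose slot was freed costs one. That is the benefit of leaving the slot allocated, at the price of the slot staying occupied.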

As I said, this pattern is common to all three scenarios. And applying a timeline to what we naturally think of as a “point in time” picture really helps in this situation.

Flash To The Rescue?

First, it’s not as if IBM Product Development hasn’t already done a lot of work in managing memory and dumping better in recent releases: It certainly has.

But there’s always room for improvement. And Flash Express certainly is a major part of this.

Reading a page from Flash (and indeed writing one to Flash) is considerably faster than disk. (And, though this might seem like a restatement of the previous sentence, the bandwidth is much higher than for disk.)

Of course the page transfer time isn’t zero and the bandwidth isn’t infinite with Flash – but it’s very high. I labour this point because I don’t want you to think this is just a cheaper way of buying the equivalent of real memory. Generally it is cheaper but it’s not the same stuff.

All three scenarios I’ve described work much better with Flash than with paging to disk. They would also work much better with additional real memory than with paging to disk, but the economics would typically be worse than with Flash.

The “Close To The Edge” scenario is worth commenting on specifically:

Although it’s possible to have DB2 buffer pools, for example, page to Flash (including 1MB page ones) this is not something you should aim to do in steady state. My view is you should back virtual storage users with real memory, as a strong preference: retrieving pages from Flash will take time and CPU cycles.

In the “DFSORT steals online and DB2’s pages” scenario there is a technical detail I think you need to know:

DFSORT uses the STGTEST SYSEVENT to establish how many free pages there are – so it can use them in a responsible way for sort work. (The majority of problems with DFSORT and memory management are where multiple sorts come in at once or where something else grabs the storage at the same time.) It’s important to note that STGTEST SYSEVENT does not regard unused Flash Express pages as free.

So, while DFSORT might chase other address spaces into Flash, it shouldn’t follow them there. I think that’s significant – and that’s why I checked on STGTEST SYSEVENT with z/OS Development.
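To make that concrete, here is a deliberately simplified Python sketch. It is not the real STGTEST interface or DFSORT's actual algorithm: both function names and all the page counts are hypothetical, and the only behaviour modelled is the one stated above, namely that unused Flash pages are not reported as free.

```python
def stgtest_free_pages(free_real_frames: int, unused_flash_pages: int) -> int:
    """Toy stand-in for the free-page figure a sort is given.

    Assumption taken from the text: unused Flash pages do not count as free,
    so only real memory frames contribute.
    """
    return free_real_frames


def sort_memory_budget(wanted_pages: int, free_real_frames: int,
                       unused_flash_pages: int) -> int:
    """Pages a well-behaved sort would take: never more than reported free."""
    return min(wanted_pages,
               stgtest_free_pages(free_real_frames, unused_flash_pages))


# Hypothetical numbers: the sort would like 500,000 pages, 200,000 frames are free.
without_flash = sort_memory_budget(500_000, 200_000, unused_flash_pages=0)
with_flash = sort_memory_budget(500_000, 200_000, unused_flash_pages=1_000_000)
print(without_flash, with_flash)  # 200000 200000
```

In this model adding Flash leaves the sort's budget unchanged, which is the sense in which DFSORT shouldn't follow other address spaces' pages into Flash.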

I can see a lot of scope for people to get strident: “Thou Must Not Page To Flash”. I actually see this as more nuanced than that. Certainly the damage in paging to Flash is much less.

So, in short, I see Flash Express as a very useful safety valve.

For further reading, take a look at zFlash Introduction Uses and Benefits.
