It is rare to need to evacuate hardware. But being rare is not an excuse to neglect to plan for it.

Obviously I’ve been sensitised to the question by real customer situations – though I don’t intend to describe those situations in any detail. This post should prove useful enough without that.

When I say “rare”, I should qualify that: hardware generation upgrades usually require handing the machine over. So I suppose one could make a distinction between such upgrades on the one hand and repair actions and emergencies on the other.

Perhaps more subtly, concurrent repair and upgrade actions are possible. With these you don’t have to hand the entire machine over. In fact it’s a valid question whether to hand it over or not.

So let’s discuss two options:

- Evacuating a whole machine

- Evacuating a processor drawer

(Obviously for a single drawer machine the two are the same. I tend to advocate customers buy machines with at least two drawers.)

Evacuating A Whole Machine

Evacuating a whole machine means taking down all of the LPARs on the machine, of whatever description. Some of those LPARs might be running specific workloads, but others might be integral to the whole enterprise, for example coupling facility LPARs containing unduplexed structures, or LPARs hosting DVIPAs.

The former need new homes found for the work they would’ve run, or else that work has to be forgone. The latter need their vital components moved – whether explicitly or automatically.

Most customers I work with have more than one machine for a given workload. Obviously, if you don’t, the situation is more serious.

Evacuating A Processor Drawer

I won’t go into the mechanics of how to evacuate a drawer. In fact I don’t know how it’s done. (To state the obvious, I’m neither a hardware planner nor a customer engineer. I try to nod wisely, though. 😊)

It should be noted that a drawer might need to be pulled out for reasons other than repairs. The prime example I can think of is adding memory cards. Though not all increases in purchased memory require additional hardware, some do. The memory cards have to be accessed from the top of the drawer so it would need to be pulled out – and would need to be deactivated.

PR/SM allocates IFLs and ICFs from the top drawer down. It allocates GCPs and zIIPs from the bottom drawer upwards. (Nomenclature for drawers varies – even within IBM infrastructure – so it might be best to refer to them by their location in the frames, but that’s cumbersome.)

When a drawer is removed any characterised processors would have to be relocated (if possible). Likewise any memory (if possible). It should be expected that PR/SM will rework physical resources as well as LPAR allocations. (Lots of other things cause similar rework – but that’s beyond the scope of this post.)

A well-planned configuration will have paths from each processor drawer to each I/O device – though not necessarily directly to each I/O drawer. While the standard planning tools strive to achieve redundant connectivity, it is worth checking. (Again not my domain, if you’ll pardon the pun.)
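To make the "worth checking" point concrete, here is a minimal sketch of the kind of check I have in mind. Everything in it is an assumption for illustration: the device and drawer names are made up, and the `paths` mapping is something you would have to build yourself from your own configuration data. It is not how any planning tool actually does it.

```python
# Hypothetical inventory: each I/O device mapped to the set of
# processor drawers that have a path to it. All names are invented.
paths = {
    "DASD01": {"drawer0", "drawer1"},
    "DASD02": {"drawer1"},
    "TAPE01": {"drawer0", "drawer1"},
}


def devices_lost_without(paths, drawer):
    """Return the devices left with no path if `drawer` is removed."""
    return sorted(
        device
        for device, drawers in paths.items()
        if not (drawers - {drawer})  # nothing left once this drawer is gone
    )


for drawer in ("drawer0", "drawer1"):
    print(drawer, devices_lost_without(paths, drawer))
```

In this made-up example removing `drawer1` strands `DASD02`, which is exactly the sort of thing you would want to know before the drawer is pulled out rather than after.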

While individual z17 DPUs (Data Processing Units) own specific channels, the loss of a DPU (actually most likely its PU chip) does lead to reworking. Perhaps one day I’ll write about SMF 73 Channel Path information and DPUs.

What I’ve said about connectivity and processor drawers could equally be said about I/O drawers.

Capacity Planning

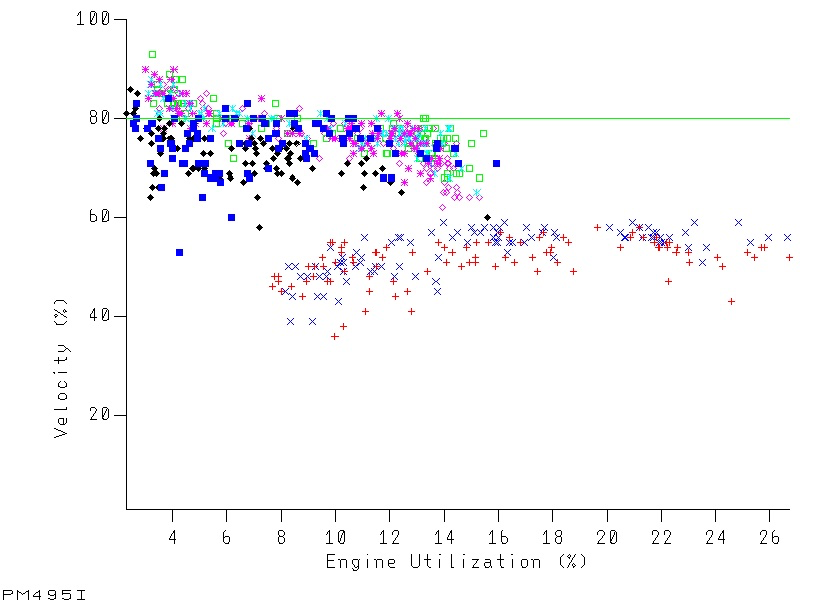

While evacuating a whole machine might lead to more loss of capacity than a single drawer, both scenarios need planning for. There needs to be enough remaining capacity to run the important workloads. I’m finding the conversation about shedding workload in an emergency is very similar to the conversation about making work displaceable. Many customers find it very difficult to find work to shed.
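The shedding conversation can be rehearsed on paper long before any emergency. Below is a minimal sketch, under loudly stated assumptions: the workload names, demands, priorities and the capacity figure are all invented, the units are arbitrary, and the greedy "keep the most important work that still fits" rule is just one simple policy, not a recommendation.

```python
def plan_shedding(workloads, surviving_capacity):
    """Decide which workloads to keep and which to shed.

    workloads: list of (name, demand, priority) tuples, where a lower
    priority number means more important. Units are arbitrary.
    Walks the workloads in priority order and keeps each one that still
    fits in the remaining capacity; anything that does not fit is shed.
    """
    kept, shed = [], []
    remaining = surviving_capacity
    for name, demand, _priority in sorted(workloads, key=lambda w: w[2]):
        if demand <= remaining:
            kept.append(name)
            remaining -= demand
        else:
            shed.append(name)
    return kept, shed


# Hypothetical workloads and capacity, purely for illustration.
workloads = [
    ("online-banking", 600, 1),
    ("batch-reporting", 300, 3),
    ("test-lpars", 200, 4),
    ("payments", 400, 2),
]
kept, shed = plan_shedding(workloads, surviving_capacity=1100)
print("keep:", kept)
print("shed:", shed)
```

The hard part, of course, is not the arithmetic but agreeing the priorities and finding work that really can be shed.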

Of course, with the exception of older machines, it might be feasible to temporarily add capacity on surviving machines.

You would want to schedule such activities when there is little work on the affected machine – but such a time is becoming increasingly difficult to find. Particularly if you make the pessimistic assumption that it will take longer than scheduled.

Conclusion

Whether evacuating the whole machine or a single processor drawer, planning for it is important. I’d advocate doing the planning at a time when the question is theoretical. You don’t want to do it in an emergency.

I would also advocate keeping an eye on where LPARs’ logical processor home addresses are in the machine. This is much easier with z16 and later machines.

One final thing: A long time ago, when Concurrent Drawer Repair was new, I presented it to a customer. Their response was “That’s very interesting, Mr Packer, but if it’s all the same to you, we’d like to continue with the practice of handing over the whole machine to you”. I wonder how many customers feel the same today.

Making Of

I wrote this post on two flights between London and Barcelona, my first time to that city. And with a new-to-me customer. My aim – for once, it seems – is to publish almost immediately.