(Originally posted 2015-11-14.)

Or can they?

Actually I can’t answer that question. I’m aware my blog gets distributed in Development in Poughkeepsie (at the very least), so maybe someone there can give a far better answer than I can.

Though this post isn’t meant to address this in its entirety, I have a point of view:

A long time ago I learnt there were processor-related control blocks in 24-bit virtual storage. In the MVS/XA era, though, you wouldn’t have expected a few (1 – 4) engines’ control blocks to be a major threat in terms of virtual storage.[1] I’m pretty certain the control blocks for engines must’ve evolved in the past 30 years: now that z/OS supports so many more processors, I find it hard to believe things haven’t had to change. Scaling **isn’t** just about increasing the value of some “max_engines” quantity. It’s also about making the experience worthwhile. So, for example, multiprocessor ratios (essentially, what happens when you add another engine) have to be convincing.

There’s plenty of evidence of Development making the mainframe and z/OS (and DB2 and CICS and …) scale.

So I’m pretty confident offline engines are largely harmless.

But, Soft! Methinks I Do Digress Too Much

This post wasn’t meant to be about any of the above. It’s actually about what happens when a physical machine has a very large number of logical processors.

Specifically, what happens to SMF 70 Subtype 1 records.

There are two scenarios that concern me (or at least challenge our code):

- A very large number of LPARs on a machine, reported on by an RMF instance.

- LPARs with a large number of logical processors defined.

Or, frankly, some combination of both.

I’ve seen 3 sets of data this year that have challenged our code because of either or both of the above.

So let me explain…

… The “headline” issue is multiple [2] SMF 70–1 records per RMF per interval.

Let Me Explain In More Detail

Most of the sections in the 70–1 record are either singletons or small. But two are worth looking at more closely…

- Logical Partition (Data) Sections – One per LPAR, whether active or not.

- Logical Processor (Data) Sections – One per logical engine defined to an LPAR, whether online or not.

An LPAR’s Logical Partition section points to the related Logical Processor sections, using a first section number and a count.

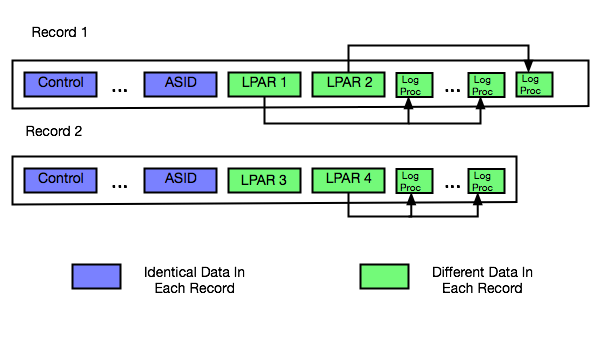

The following diagram illustrates this.

Here we have 2 records from the same interval and the same RMF instance:

- The first record has 2 Logical Partition sections, each pointing to a number of Logical Processor sections.

- The second record has the remaining 2 Logical Partition sections for the machine. The first one (LPAR3) is deactivated, having no Logical Processor sections. The second one (LPAR4) has Logical Processor sections (and is therefore active).
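To make the “first section number and a count” pointer concrete, here’s a minimal Python sketch of resolving one LPAR’s Logical Processor sections within a single record. The dictionary keys (`name`, `first`, `count`) are hypothetical stand-ins for the real SMF 70–1 fields, and I’m assuming the first section number is 1-based and counts only the Logical Processor sections in the same record.

```python
def processors_for(partition, processor_sections):
    """Return the Logical Processor sections belonging to one LPAR.

    partition: dict with hypothetical keys 'name', 'first' (assumed to be a
    1-based section number) and 'count' (number of sections).
    processor_sections: this record's Logical Processor sections, in order.
    """
    if partition["count"] == 0:
        return []  # e.g. a deactivated LPAR has no Logical Processor sections
    start = partition["first"] - 1  # convert the assumed 1-based number to a list index
    return processor_sections[start:start + partition["count"]]


# Illustrative data loosely modelled on the second record: LPAR3 deactivated,
# LPAR4 active with (say) 3 logical processors
procs = ["LPAR4 CP0", "LPAR4 CP1", "LPAR4 CP2"]
lpar3 = {"name": "LPAR3", "first": 0, "count": 0}
lpar4 = {"name": "LPAR4", "first": 1, "count": 3}
print(processors_for(lpar3, procs))  # []
print(processors_for(lpar4, procs))  # ['LPAR4 CP0', 'LPAR4 CP1', 'LPAR4 CP2']
```

Treating a zero count as “no sections” up front means a deactivated LPAR never tries to index into the list at all.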

Around 300 – 350 Logical Processor sections are enough to fill up a 32KB SMF record. And that’s when you get a second one.[3]
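Here’s a rough Python sketch of how a machine’s sections might spill into a second record, assuming (as the diagram suggests) that an LPAR’s Logical Partition section and all its Logical Processor sections are always kept together. This isn’t RMF’s actual code, and apart from the 32,756-byte maximum SMF record length the section sizes are made-up numbers for illustration.

```python
from dataclasses import dataclass, field

# Hypothetical sizes in bytes, for illustration only.
RECORD_LIMIT = 32756     # maximum length of an SMF record
FIXED_OVERHEAD = 2000    # assumed: header plus sections repeated in every record
PARTITION_SECTION = 80   # assumed length of one Logical Partition section
PROCESSOR_SECTION = 90   # assumed length of one Logical Processor section


@dataclass
class Record:
    lpars: list = field(default_factory=list)  # (LPAR name, defined processors)
    used: int = FIXED_OVERHEAD                 # bytes consumed so far


def pack(lpars):
    """Place each LPAR's Logical Partition section and all its Logical
    Processor sections in the same record, starting a new record when the
    whole group won't fit in the current one. (A single group bigger than
    a whole record isn't handled; this is only a sketch.)"""
    records = [Record()]
    for name, defined in lpars:
        group = PARTITION_SECTION + defined * PROCESSOR_SECTION
        if records[-1].lpars and records[-1].used + group > RECORD_LIMIT:
            records.append(Record())
        records[-1].lpars.append((name, defined))
        records[-1].used += group
    return records


# 12 LPARs, each with 32 logical processors defined (online or offline)
machine = [(f"LPAR{i}", 32) for i in range(1, 13)]
for n, rec in enumerate(pack(machine), start=1):
    print(n, len(rec.lpars), sum(d for _, d in rec.lpars), rec.used)
```

With these made-up sizes the first record takes 10 LPARs (320 Logical Processor sections, 31,600 bytes) and the last 2 LPARs spill into a second record.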

This Requires Care

Here are some things to note:

- When processing these sections, it’s very useful that all the Logical Processor sections for an LPAR are in the same record as the corresponding Logical Partition section.

- Every 70–1 has counts of the machine’s characterised processors (the ones you bought).

- Every 70–1 has other sections, relating to this LPAR’s definition, its CPU utilisation and its address space queues. These are all present in each record for the RMF / interval combination.

- Every 70–1 has the pool names, in a set of 6 CPU Identification sections.

Because the CPU utilisation and address space queue information is repeated in every 70–1 record, we were double-counting important things[4] when we had two 70–1s per interval. I fixed this by using only the first record’s copy of these sections.
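A minimal Python sketch of that fix, assuming the records have already been parsed into dictionaries. The keys (`sid`, `interval`, `cpu_busy`, `lpars`) are hypothetical: `cpu_busy` stands in for any section repeated in every record, and `lpars` for the Logical Partition sections that are split across records.

```python
def combine(records):
    """Merge all 70-1 records cut by one RMF instance for one interval.

    Each record is a dict with hypothetical keys:
      'sid'      - system that cut the record
      'interval' - interval identifier
      'cpu_busy' - stands in for the sections repeated in every record
      'lpars'    - the Logical Partition sections unique to this record
    """
    merged = {}
    for rec in records:  # records in the order they were read
        key = (rec["sid"], rec["interval"])
        if key not in merged:
            # First record for this RMF / interval: take its copy of the repeated sections
            merged[key] = {"cpu_busy": rec["cpu_busy"], "lpars": []}
        # LPAR sections are split across records, so collect them from every record
        merged[key]["lpars"].extend(rec["lpars"])
    return merged


recs = [
    {"sid": "SYSA", "interval": "10:00", "cpu_busy": 1000, "lpars": ["LPAR1", "LPAR2"]},
    {"sid": "SYSA", "interval": "10:00", "cpu_busy": 1000, "lpars": ["LPAR3", "LPAR4"]},
]
result = combine(recs)[("SYSA", "10:00")]
print(result["cpu_busy"], result["lpars"])  # 1000 ['LPAR1', 'LPAR2', 'LPAR3', 'LPAR4']
```

Naively summing `cpu_busy` across both records would give 2000, which is exactly the double counting described above.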

How Does This Come To Be?

As I said above, the 70–1 contains Logical Processor sections even for offline processors. If you have a lot of LPARs, each with, say, 32 logical processors defined and 25 of them offline, you get lots of 32-section groups.

It’s not hard to get to more than 300 Logical Processor sections for a machine, then.
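As a worked example, assume (purely for illustration) 10 LPARs, each defined as in the previous paragraph with 32 logical processors of which 25 are offline:

$$
10 \times 32 = 320 \ \text{Logical Processor sections}, \qquad 10 \times (32 - 25) = 70 \ \text{online logical processors}
$$

So 320 sections get written, already inside the 300 – 350 range that fills a record, even though only 70 of those logical processors are online.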

And scenarios where LPARs have lots of defined processors are very common:

- IRD’s Logical Processor Management function varies engines online and offline.

- Bringing online an offline processor is much easier than having to define additional ones.

- HiperDispatch parks and unparks Vertical Low processors. They’re still online when parked.

There are probably other scenarios I’m not intimately familiar with.

An Aside On Duplicate 70–1 Data

A discussion I had this week with a colleague highlighted that not many people know the following:

Suppose you have a machine with 2 LPARs (SYSA and SYSB), each running RMF.

Suppose you broke up their 70–1 records and stored the Logical Partition and Logical Processor sections as rows in 2 performance database tables.

You will get two sets of rows in each table, nearly identical. That’s because RMF in SYSA and RMF in SYSB each retrieve the same data from PR/SM, independently, when they cut their 70–1 records.

In our code, when laying out the LPARs, we pick one z/OS RMF system for each machine and only report on the LPARs from its 70–1s. It might be stating the obvious, but you should do the same.
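Here’s a minimal Python sketch of that filtering, assuming rows of `(machine, reporting_sid, lpar_name)`. The machine name `CEC1` is hypothetical, and choosing the alphabetically lowest SID is an arbitrary rule of mine, not necessarily what our code does.

```python
def one_reporter_per_machine(rows):
    """Keep only the Logical Partition rows reported by one z/OS system per machine.

    rows: (machine, reporting_sid, lpar_name) tuples, one per Logical Partition
    section, from every RMF instance on every machine.
    The choice of reporter (alphabetically lowest SID) is an arbitrary assumption.
    """
    reporters = {}
    for machine, sid, _ in rows:
        reporters.setdefault(machine, set()).add(sid)
    chosen = {machine: min(sids) for machine, sids in reporters.items()}
    return [row for row in rows if row[1] == chosen[row[0]]]


rows = [
    ("CEC1", "SYSA", "SYSA"), ("CEC1", "SYSA", "SYSB"),
    ("CEC1", "SYSB", "SYSA"), ("CEC1", "SYSB", "SYSB"),
]
print(one_reporter_per_machine(rows))
# [('CEC1', 'SYSA', 'SYSA'), ('CEC1', 'SYSA', 'SYSB')]
```

In practice you’d probably want the chosen system to be one that’s up in every interval, otherwise the machine disappears from the report whenever that LPAR is down.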

We also report on each processor pool separately, noting that z/OS LPARs are often in two pools – GCP Pool and zIIP Pool.
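A small Python sketch of splitting by pool, assuming each Logical Processor section has already been tagged with its LPAR and pool name (in real data the pool names come from the CPU Identification sections). The labels `GCP` and `zIIP` here are illustrative; the names in the records themselves may differ.

```python
from collections import defaultdict


def by_pool(processor_sections):
    """Group Logical Processor sections by processor pool, then by LPAR.

    processor_sections: (lpar_name, pool_name) pairs, one per Logical
    Processor section.
    """
    pools = defaultdict(lambda: defaultdict(int))
    for lpar, pool in processor_sections:
        pools[pool][lpar] += 1  # count this LPAR's logical processors in this pool
    return {pool: dict(lpars) for pool, lpars in pools.items()}


sections = [("SYSA", "GCP"), ("SYSA", "GCP"), ("SYSA", "zIIP"), ("SYSB", "GCP")]
print(by_pool(sections))
# {'GCP': {'SYSA': 2, 'SYSB': 1}, 'zIIP': {'SYSA': 1}}
```

Note SYSA turns up in both pools, which is why each pool needs reporting on separately.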

In Conclusion

Almost everything I’ve talked about in this post relates to logical processors. Once you add in physical processors, the 70–1 record gets even more complex.

It’s highly valuable data so process it carefully.

And most customers don’t have to worry about multiple 70–1 records per interval per RMF instance. You can easily check whether you do by using ERBSCAN and ERBSHOW against an SMF data set from ISPF 3.4.

But generally, offline processors are harmless. Indeed operationally useful.

[1] Correct me if I’m wrong, please. I suspect someone has a war story or two.

[2] Actually, in each case it’s been only two, but the problem generalises to more than two (as does our code solution).

[3] And potentially a third, etc.

[4] For example, the Capture Ratio came out at just short of 50%, because the 70–1 CPU was double-counted while the 72–3 records weren’t.
