It was recently brought to my attention that CFLEVEL 25, made available with IBM z16, improved ICA-SR links.

(I don’t know why I didn’t spot this before, but it’s documented in several places, including IBM Db2 13 for z/OS Performance Topics, an interesting Redbook. I actually read it from cover to cover during a recent power outage.)

An ICA-SR (Integrated Coupling Adapter Short Reach) link is short distance and faster than a CE-LR (Coupling Express Long Reach) link. The ICA-SR fanout connects directly to the processor drawer. There are two flavours:

- ICA-SR (Feature Code 0172)

- ICA-SR 1.1 (Feature Code 0176)

You can carry both of these forward into a z16. This post, though, is exclusively about ICA-SR 1.1.

Note: ICA-SR links can be up to 150 m. Any longer and you’d be using CE-LR links.

What’s Changed

The ICA-SR 1.1 hardware didn’t change between IBM z15 and z16. What changed is the protocol.

To quote from IBM z16 (3931) Technical Guide:

On IBM z16, the enhanced ICA-SR coupling link protocol provides up to 10% improvement for read requests and lock requests, and up to 25% for write requests and duplexed write requests, compared to CF service times on IBM z15 systems. The improved CF service times for CF requests can translate into better Parallel Sysplex coupling efficiency; therefore, the software costs can be reduced for the attached z/OS images in the Parallel Sysplex.

The changes that led to these improvements are:

- Removing the memory round trip to retrieve message command blocks.

- Removing the cross-fiber handshake to send data for a CF write command.

It almost doesn’t matter what the changes were, except that the second one probably explains the relatively large improvement for write requests (whether duplexed or not).
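For a sense of scale, here is a back-of-envelope sketch of the propagation delay a single round trip adds over fiber. It assumes signals travel at roughly 5 ns per metre in glass and ignores adapter and protocol latency, so it is a lower bound on what a removed handshake might save, not a measurement.

```python
# Assumption: light in glass fiber travels at roughly 2e8 m/s, i.e. about 5 ns per metre.
# Adapter, serialization and protocol latency are deliberately ignored.
NS_PER_METRE = 5.0


def round_trip_us(distance_m: float) -> float:
    """Approximate round-trip propagation delay in microseconds over distance_m of fiber."""
    one_way_ns = distance_m * NS_PER_METRE
    return 2 * one_way_ns / 1000.0


for metres in (10, 50, 150):
    print(f"{metres:>4} m: ~{round_trip_us(metres):.2f} us per round trip")
```

At the 150 m ICA-SR maximum that comes to about 1.5 µs per round trip, which is not negligible relative to synchronous service times of a few microseconds.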

Impact Of The Improvement

So, how do we interpret the effect of these improvements? Usually we divide structure service time decreases into two areas of benefit:

- Workload response time decreases and throughput improvements.

- Coupled CPU reductions for synchronous requests.

(Conversely, an increase in service times leads to the opposite effects. This would typically be the result of increasing the distance between z/OS and the coupling facility.)

On the first point, most applications aren’t overly sensitive to coupling facility request times. Often they’re more sensitive to other aspects, such as obtaining locks or buffer pool invalidations. But one shouldn’t dismiss this out of hand.

On the second point, recall that a coupled (z/OS) processor spins waiting for a synchronous request. So, the faster a synchronous request is serviced, the lower the z/OS CPU cost.
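A common back-of-envelope way to size that spin cost is synchronous request rate multiplied by synchronous service time, which gives engines’ worth of z/OS CPU. This sketch applies the best-case “up to” reductions from the Technical Guide quote to some made-up request rates and service times; all the inputs are hypothetical, and real savings will usually be smaller.

```python
# Hypothetical sync request rates and z15 service times, per request type.
# These are illustrative numbers, not measurements.
requests = {
    # type: (sync requests per second, z15 sync service time in microseconds)
    "read": (40_000, 8.0),
    "lock": (30_000, 6.0),
    "write": (10_000, 12.0),
}

# Best-case ("up to") reductions quoted in the IBM z16 Technical Guide.
UP_TO_REDUCTION = {"read": 0.10, "lock": 0.10, "write": 0.25}


def spin_engines(reqs, reduction=None):
    """Engines' worth of z/OS CPU spent spinning: sum of rate x service time."""
    total_us_per_s = 0.0
    for kind, (rate, service_us) in reqs.items():
        factor = 1.0 - (reduction or {}).get(kind, 0.0)
        total_us_per_s += rate * service_us * factor
    return total_us_per_s / 1_000_000  # CPU microseconds per second -> engines


before = spin_engines(requests)
after = spin_engines(requests, UP_TO_REDUCTION)
print(f"before: {before:.3f} engines, after (best case): {after:.3f} engines")
```

With these inputs the spin cost drops from 0.62 to 0.54 engines, but only if every request type achieves its full “up to” improvement.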

It’s worth noting that individual processors are faster on a z16 than on a z15. A faster z/OS engine could have done more work in each microsecond it spends spinning, so at unchanged service times the coupling cost, as a share of CPU, would rise. So it might be that the z16 ICA-SR 1.1 improvements more or less match the coupled engine speed improvement. You might consider this “running to stand still”, but both improvements are net gains for most customers. Further, it makes ICA-SR 1.1 more attractive than ICA-SR on a z16.
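To make the “running to stand still” point concrete, here is a toy model of coupling overhead as a share of CPU time. It assumes, hypothetically, a unit of work needing 100 µs of CPU on a z15, a 10% faster z16 engine and 10 µs of synchronous spin per unit of work; none of these are measured or published figures.

```python
def coupling_overhead(cpu_us_on_z15: float, engine_speedup: float, sync_spin_us: float) -> float:
    """Share of CPU spent spinning on sync CF requests for one unit of work.

    Toy model (hypothetical): productive CPU time shrinks as the engine gets
    faster, but spin time tracks CF service time, which engine speed doesn't shorten.
    """
    productive_us = cpu_us_on_z15 / engine_speedup
    return sync_spin_us / (productive_us + sync_spin_us)


# Hypothetical inputs: 100 us of CPU per unit of work on a z15, 10 us of sync spin.
z15 = coupling_overhead(100.0, 1.0, 10.0)
z16_same_links = coupling_overhead(100.0, 1.1, 10.0)   # faster engine, same service time
z16_faster_links = coupling_overhead(100.0, 1.1, 9.0)  # faster engine, 10% better service time

print(f"z15: {z15:.3f}  z16, same links: {z16_same_links:.3f}  z16, faster links: {z16_faster_links:.3f}")
```

In this model the faster engine alone pushes the overhead from about 9.1% to 9.9%, and a 10% service time improvement brings it back to roughly 9.0%.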

A reduction in request service times over physical links might make using external coupling facilities more feasible. This could open up more architectural choices – such as using external coupling facilities where today you use internal.

Note: A reduction in service times can lead to some formerly asynchronous requests becoming synchronous. This is not a request-level conversion process but rather a consequence of the dynamic conversion heuristic: more requests are now serviced faster than the heuristic’s thresholds. If this happens, the coupled (z/OS) CPU might well go up. Of course, the former async requests would probably have even lower service times, because they’d now be sync.
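The sketch below is a deliberately simplified stand-in for the dynamic conversion heuristic, not the actual z/OS algorithm. It assumes a single, hypothetical fixed threshold and treats async requests as costing no spin CPU. It shows how a uniform 25% service time reduction can move requests under the threshold and raise total synchronous spin time, even though each request is faster.

```python
# Simplified stand-in for the dynamic conversion heuristic (NOT the z/OS algorithm):
# a request stays sync if its predicted service time is at or under a fixed threshold.
THRESHOLD_US = 20.0  # hypothetical threshold, not a z/OS value


def split_sync_async(predicted_us, threshold_us=THRESHOLD_US):
    """Return (sync count, async count) for a list of predicted service times."""
    sync = sum(1 for t in predicted_us if t <= threshold_us)
    return sync, len(predicted_us) - sync


def sync_spin_us(predicted_us, threshold_us=THRESHOLD_US):
    """Total service time of requests that stay sync, i.e. z/OS CPU spent spinning.

    Async requests are treated as costing no spin, which ignores their own CPU overhead.
    """
    return sum(t for t in predicted_us if t <= threshold_us)


before = [12.0, 18.0, 22.0, 25.0, 30.0, 40.0]  # hypothetical service times in microseconds
after = [t * 0.75 for t in before]             # assume a uniform 25% improvement

print(split_sync_async(before), split_sync_async(after))
print(sync_spin_us(before), sync_spin_us(after))
```

Here two of the six requests stay sync before the improvement and four after, and total sync spin rises from 30 µs to 57.75 µs.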

Conclusion

It seems appropriate to encourage anyone moving to z16 to ensure their ICA-SR links are 1.1 – whether they brought them forward or perhaps replaced older ICA-SR links. Of course, there might be a cost downside to balance against the upsides.

It also seems to me to make ICA-SR on z16 more attractive, relative to IC links on previous generations. That might increase configuration options, including more resilient designs.

Two other RMF-related things to note:

- RMF doesn’t distinguish between ICA-SR generations; they all have Channel Path Acronym “CS5”. (CE-LR is “CL5” and IC Peer is “ICP”, for completeness.)

- RMF doesn’t have a fine-grained view of Coupling Facility request types. (It does know about castouts but that’s about all.)

Neither of these is RMF’s fault; it can only report what the interfaces it uses provide.
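If you post-process RMF channel path data yourself, the acronyms are about all you have to go on for link type. This minimal sketch assumes you have already extracted (CHPID, acronym) pairs by whatever means you normally use; the CHPIDs and the unknown “XYZ” acronym are hypothetical. Note that it can only label CS5 paths as ICA-SR of either generation.

```python
# Channel Path Acronyms as reported by RMF. CS5 covers both ICA-SR generations,
# so Feature Code 0172 and 0176 links cannot be told apart from this alone.
LINK_TYPES = {
    "CS5": "ICA-SR (either generation)",
    "CL5": "CE-LR",
    "ICP": "IC Peer",
}


def summarise_paths(paths):
    """Group (chpid, acronym) pairs by link type, keeping unknown acronyms visible."""
    summary = {}
    for chpid, acronym in paths:
        link_type = LINK_TYPES.get(acronym, f"other ({acronym})")
        summary.setdefault(link_type, []).append(chpid)
    return summary


# Hypothetical CHPIDs, assumed to be already extracted from RMF data.
paths = [("80", "CS5"), ("81", "CS5"), ("90", "CL5"), ("F0", "ICP"), ("A0", "XYZ")]
for link_type, chpids in summarise_paths(paths).items():
    print(f"{link_type}: {', '.join(chpids)}")
```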

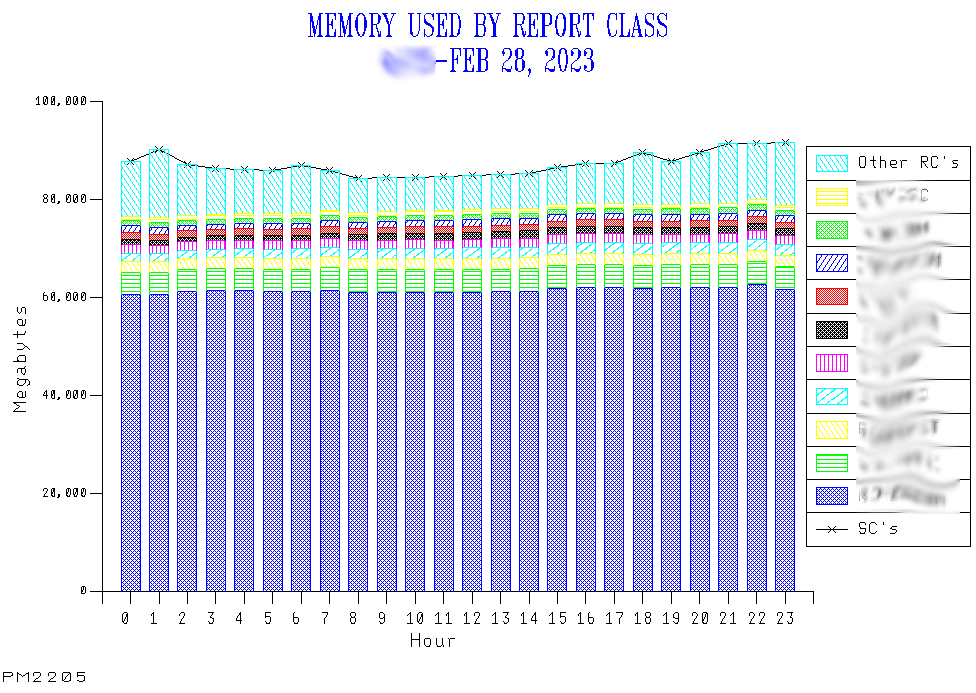

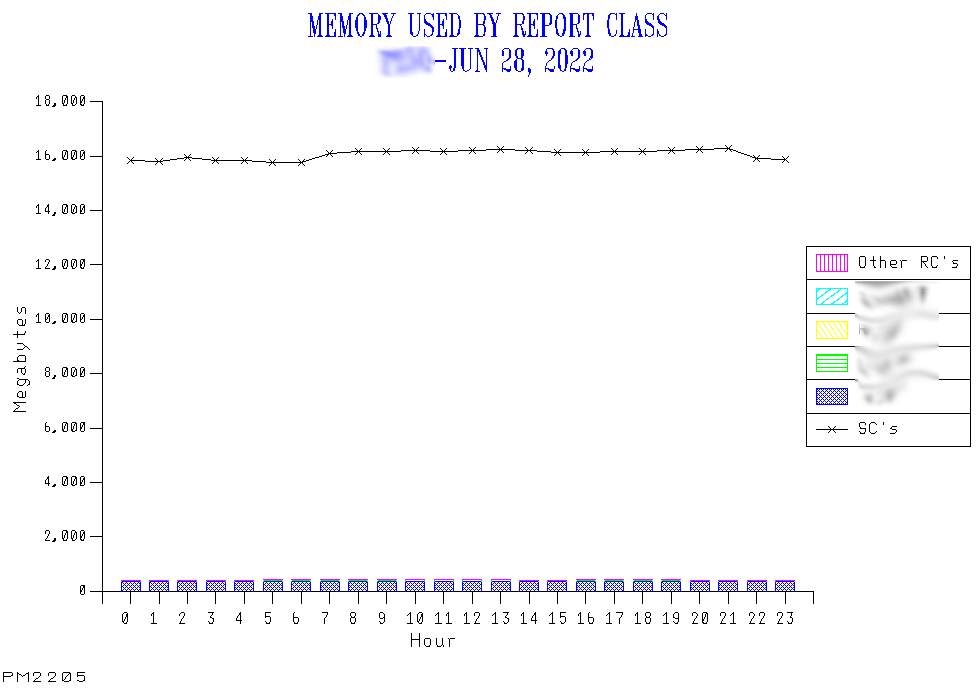

One final thought: As articulated in A Very Interesting Graph – 4 months ago – there’s much more to request performance than just ICA-SR niceties. But the improvement in z16 ICA-SR 1.1 is surely welcome.