(Originally posted 2019-03-22.)

I’m writing this on a plane, heading to Copenhagen. Planes, like weekends, give me time to think. Or something. 🙂

Ardent followers of this blog will probably wonder why there have been few “original content” posts here[1] recently.

Well, I’ve been working on an exciting project with my friend and colleague Anna Shugol. Now is the time to begin to reveal what we’ve been working on. We call this project “Engine-ering”[2].

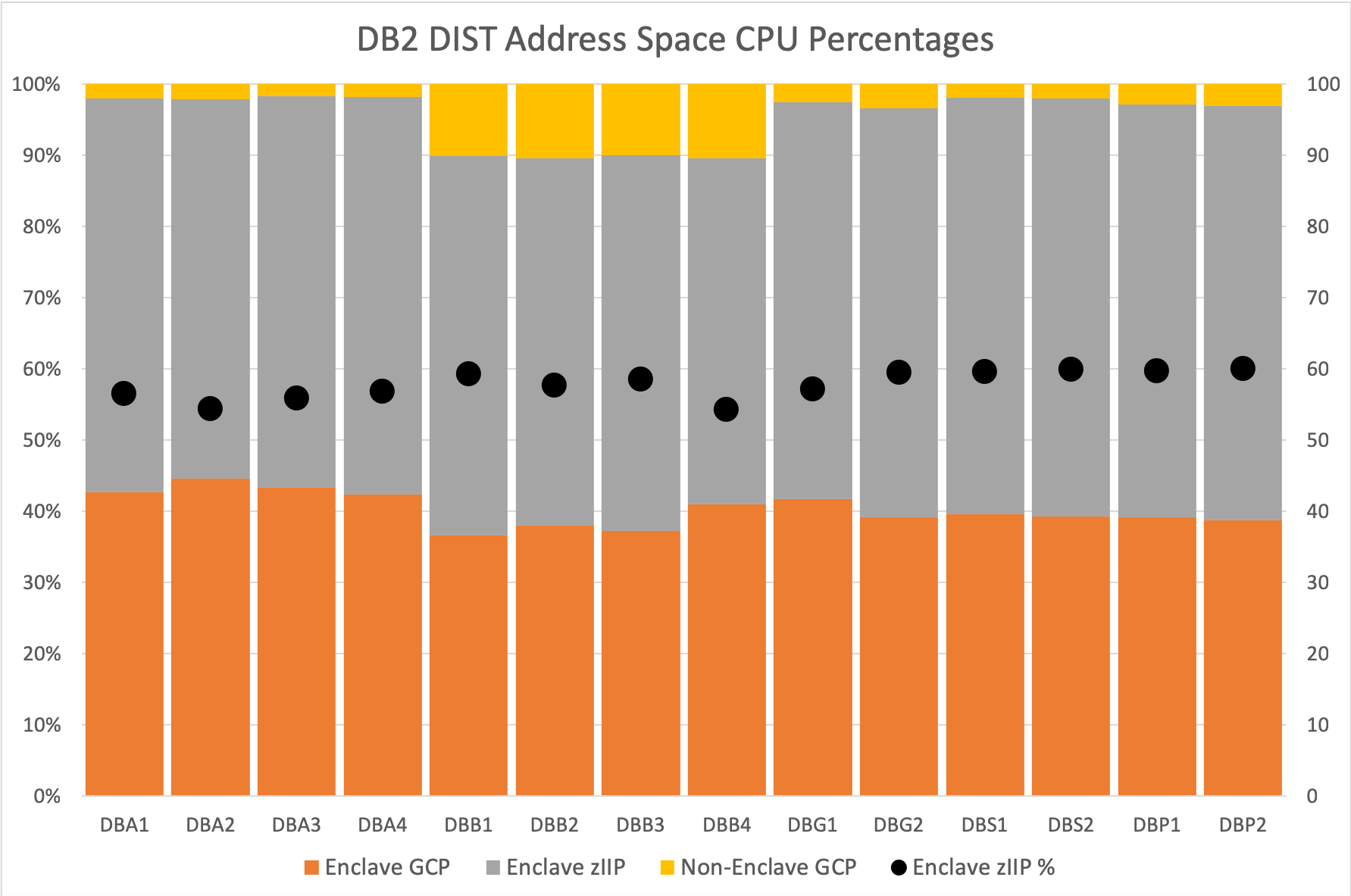

The idea is simple: There is real merit in examining CPU at the individual processor level, for example the individual zIIP. As one colloquial term for processor is “engine”, it’s easy to end up with a title such as “Engine-ering”, and the hashtag #EngineeringWorks is way too tempting not to deploy.

The project has three parts:

- Writing some analysis code.

- Deploying the code into real customer situations.

- Writing a presentation.

These three are intertwined, of course. As we go on we will:

- Write more code.

- Gain more experience with it in customer situations.

- Evolve our presentation.

You’d expect nothing less from us.

Traditional CPU Analysis

Traditionally, CPU has been looked at from a number of perspectives:

- Machine and LPAR – with SMF 70-1.

- Workload and service class – with SMF 72-3.

- Address space – with SMF 30-2/3, also 4/5.

- DB2 transaction – with SMF 101 – and its analogues for other middleware.

- Coupling Facility – with SMF 74-4.

All of these have tremendous merit – and I’ve worked with them extensively over the years.

z/OS Engine Level

Our idea is that there is merit in diving below the LPAR level, even below the processor pool level. So we would want to, for example, examine the zIIP picture for an LPAR. But we wouldn’t want to just look at it in aggregate. We want to see individual processors. There are at least a couple of reasons:

- Skew between engines could be important.

- Behaviours, such as HiperDispatch parking, get thrown into sharp relief.

RMF

RMF (SMF 70-1) reports individual engines at two levels:

- This z/OS image.

- All the LPARs on this machine.

The trick is marrying these two perspectives together. Fortunately, a few years ago, I realised I could use the partition number of the reporting system and match it to the partition number of one of the LPARs. That does the trick.
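As a rough illustration of that matching step, here is a minimal Python sketch. It assumes the SMF 70-1 data has already been parsed into dictionaries; the field names `partition_number` and `name`, and the LPAR names, are hypothetical stand-ins rather than real record field names, and this is not the code Anna and I actually run.

```python
from typing import Optional


def find_own_lpar(reporting_partition_number: int,
                  lpar_sections: list) -> Optional[dict]:
    """Return the PR/SM LPAR entry whose partition number matches
    the partition number of the reporting z/OS system."""
    for lpar in lpar_sections:
        if lpar["partition_number"] == reporting_partition_number:
            return lpar
    return None  # No LPAR on the machine matched the reporting system


# Hypothetical, already-parsed PR/SM view: one entry per LPAR on the machine.
lpars = [
    {"name": "PRODA", "partition_number": 1},
    {"name": "PRODB", "partition_number": 2},
    {"name": "TEST1", "partition_number": 5},
]

# Hypothetical z/OS view: the reporting system says it is partition 2.
own = find_own_lpar(2, lpars)
```

Once the reporting system’s own LPAR is identified, its PR/SM engine-level data can be lined up with the z/OS-level data for the same image.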

In the past week I wrote some code to pump out engine level statistics for the reporting LPAR:

- Vertical weights

- Engine-level CPU utilization

- Parked (or unparked) time

The first two are from the PR/SM view. The third is from the z/OS view. Which makes sense.

In any case I have some pretty graphs. And I got to swear at Excel a lot.[3]

SMF 113 Hardware Counters

This one is more Anna’s province than mine. But, by processing SMF 113-1 records at the individual engine level, we can now see individual engine behaviours in the following areas:

- We can see instructions executed, cycles used to execute them, and hence compute Cycles Per Instruction (CPI).

At the individual engine level there is some very interesting structure, especially between Vertical Low processors (with zero vertical weight) and Vertical Highs (VHs) and Mediums (VMs).

Actually there is a lot of difference sometimes between individual VH and VM engines.

- We can see the impact of Level 1 Cache misses – in terms of penalty cycles per instruction – for Data Cache and Instruction Cache individually. This begins to explain the CPI behaviours we see.

Pro Tip: Understanding the cache hierarchy in a processor really helps, and it’s different from generation to generation.
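To make the CPI arithmetic concrete, here is a small Python sketch of computing it per engine. The dictionary keys (`engine_id`, `polarity`, `cycles`, `instructions`) are hypothetical names for already-extracted values, not SMF 113 field names, and the numbers are made up purely for illustration.

```python
from typing import Optional


def cycles_per_instruction(cycles: int, instructions: int) -> Optional[float]:
    """CPI = cycles used / instructions executed.
    Returns None when the engine executed no instructions in the interval
    (for example a parked engine), rather than dividing by zero."""
    if instructions == 0:
        return None
    return cycles / instructions


# Illustrative values only: one entry per logical engine for one interval.
engines = [
    {"engine_id": 0, "polarity": "VH", "cycles": 9_000_000, "instructions": 3_000_000},
    {"engine_id": 1, "polarity": "VM", "cycles": 8_000_000, "instructions": 2_000_000},
    {"engine_id": 2, "polarity": "VL", "cycles": 1_200_000, "instructions": 200_000},
    {"engine_id": 3, "polarity": "VL", "cycles": 0, "instructions": 0},
]

# Map each engine to its CPI so engines can be compared side by side.
cpi_by_engine = {
    e["engine_id"]: cycles_per_instruction(e["cycles"], e["instructions"])
    for e in engines
}
```

Keeping the polarity alongside each engine makes it easy to compare Vertical Highs, Mediums and Lows afterwards.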

Those of you who know SMF 113 know there are many more counters. We intend to extend our code to look at those soon.

SMF 99-12 And -14

Another area we intend to extend our code to analyse is SMF 99 subtypes 12 and 14. This data will tell us how logical engines relate to physical engines, right down to which drawer they’re in, which cluster (or node for z13), even which chip. All of this can help with understanding the “why” of what SMF 113 is telling us.

Coupling Facility

You can play a similar RMF-level game for coupling facilities. Normally, you wouldn’t expect much skew between CF engines. But in Getting Nosy With Coupling Facility Engines I showed this wasn’t always the case.

I would say that, while the “don’t run your coupling facility CPU more than 50% busy” rule is sensible, you might want to adjust it for any skew your coupling facilities are exhibiting.
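One possible way to take skew into account, sketched in Python, is to look at the busiest engine as well as the average. This is just one interpretation of “adjusting for skew”, not an established rule; the function names and the sample utilisations are hypothetical.

```python
def engine_skew(busy_percents: list) -> dict:
    """Summarise skew across engines: average, busiest engine,
    and the ratio of the busiest engine to the average."""
    average = sum(busy_percents) / len(busy_percents)
    busiest = max(busy_percents)
    ratio = busiest / average if average else None
    return {"average": average, "busiest": busiest, "ratio": ratio}


def busiest_engine_exceeds(busy_percents: list, threshold: float = 50.0) -> bool:
    """True if any single engine is busier than the threshold,
    even when the average across engines is below it."""
    return max(busy_percents) > threshold


# Illustrative per-engine busy percentages for one coupling facility.
cf_engines = [30.0, 40.0, 65.0, 45.0]

summary = engine_skew(cf_engines)
flagged = busiest_engine_exceeds(cf_engines)
```

In this made-up case the average sits under 50% while one engine is well over it, which is exactly the situation where the aggregate number alone would look comfortable.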

Outro

We presented this material the other day to the zCMPA working group of GSE UK. This was to a small number of sophisticated customers, most of whom I’ve known for many years. It’s become a bit of a tradition to present an “alpha” version of the presentation.[4]

This post roughly follows the structure of the presentation. In this presentation we have some very pretty graphs. 🙂

Anna coined the term “research project”. I like it a lot.[5] In any case, the code is a permanent part of our kitbag. If you send me data, expect me to ask for this new stuff and to use it in conversations with you. I think you’ll enjoy it.

We think the presentation went very well, with some nice discussion from the participants. Partly because of that, but not really, we intend to keep capturing hills with the code, gaining experience with customers, and evolving the presentation. Every so often I’ll highlight bits of it here. Stay tuned!

1. I don’t count podcast show notes as “original content”, by the way. They’re more a personal note on each episode.

2. You wouldn’t believe what various forms of autocorrect do to the string “Engine-ering”. Three examples are “Engine-Ewing” and, hilariously, “Engine-erring” and “Engine-earring”. 🙂

3. The only way, in my experience, not to swear at Excel a lot is to automate the things you find fiddly about it. I’ve done some of that, too.

4. Last year Anna and I presented an alpha version of “Two LPARs Good, Four LPARs Better?” to the same group. It was much better to actually have her in the room with us this time. 🙂

5. Much better than my “you’re all being experimented on”. 🙂