(Originally posted 2018-01-26.)

“What is this DB2 subsystem for?” is an important question – at least to me. And explaining this topic over lunch to a friend of mine who was a DB2 developer, convinced me it has more general importance.

If you’re a DB2 sysprog or DB2 the answer is probably well known to you 1.

If you’re not a DB2 person it’s probably not so obvious.

Something worth noting at this stage is that very little of what’s in this post is specific to DB2. Much of it applies to IMS and MQ, for example.

Why Do We Care What A DB2 Is For?

In general, the more you know about what is going on on your systems the better.

Certainly, from my perspective as an outsider, I think I’m more effective with a customer if I know what their infrastructural components are for. It’s a kind of architectural thinking – so it might appeal to architects and infrastructure managers in customers as well.

To take a (generalised) example, if I see a DB2 Datasharing group I want to know what each member (DB2 subsystem) is for. Elements of the answer might include statements like:

- This DB2 is for Batch.

- These DB2s are clones of each other, for resilience.

We’ll return to these presently.

One other point: Subsystems arise over time for different reasons. Two examples:

- To provide virtual storage constraint relief.

- Different applications came into being, each with their own DB2. They might even have come from different companies merging. I’ve heard customers refer to these as “heritages”.

Add to that how Datasharing groups come to exist and the picture gets really interesting.

And the reason for all this is to be able to provide governance and manage DB2 subsystems better.

Detecting The Different Roles

This post really emphasises the use of SMF 30 (Address Space) and RMF data. The reason for using this data (and not DB2-specific data) is quite simple: The approach has to scale. Many customers have more than 50 DB2 subsystems, some more than 100. If you do, you’re not going to be keen on processing Accounting Trace for all of them.2

Of course, having formed a high level view this way, you might well want to dive into Accounting Trace – but only for some subsystems and time ranges.

Detecting DB2 Subsystems

This is the easy bit: From SMF 30, if the job name is fredDBM1 and the program name is DSNYASCP then we have subsystem fred. We can see its DB2 version in the Usage Data Section.

Obviously you see its SMFID, Workload, Service Class, Report Class and Reader Start Time.

Detecting Datasharing Groups

You can, most of the time, obtain a good grouping of subsystems into Datasharing groups. The following technique works if each DB2 subsystem has its own IRLM and if the IRLM address space’s job name matches the subsystem. For example the IRLM address space corresponding to DB2ADBM1 (subsystem DB2A) is DB2AIRLM.

A DB2 Datasharing group has two XCF groups, supporting the group’s LOCK1 Coupling Facility structure. One’s name begins with IXCLO. It’s the other one that interests me: It is a proxy for the group name. For example, it might be called DXRPROD when the actual group’s name is DSNPROD or DB2PROD. But it’s close enough. (CF structure names could be used to patch the name, perhaps.)

In any case the IRLM address spaces – as proxies for subsystems – can be used this way to detect Datasharing groups.

I discussed this in detail in DB2 Data Sharing and XCF Job Name back in 2009.

Working Out What Role A Subsystem Plays

This is the bit that really excites me: My bold claim is I can tell you something about the work coming into a DB2 subsystem without using DB2 instrumentation3. And the use of SMF 30 only is important because most people connect SMF 30 across their entire z/OS estate.

The key to this is the Usage Data Section in SMF 30, which I’ve talked about many times.

As an example, take a CICS region. (You detect these by the program name being DFHSIP). If the CICS region connects to a DB2 subsystem you get at least one Usage Data Section that documents the DB2 connection. In particular it tells you the DB2 subsystem it connects to. I’ve discussed this in a 2012 post: Another Usage Of Usage Information.

By the way a CICS region can directly attach to only one DB2 subsystem (likewise only one MQ queue manager).

So you can identify all the CICS regions connecting to a given DB2. You could, in addition to naming them, add up their CPU.

Similarly, for IMS work you’d see a Usage Data Section mentioning IMS.4

And almost everything else you could lamely call “Other” or be bold and call it “Batch”. My code counts the distinct job names and sums up the CPU.

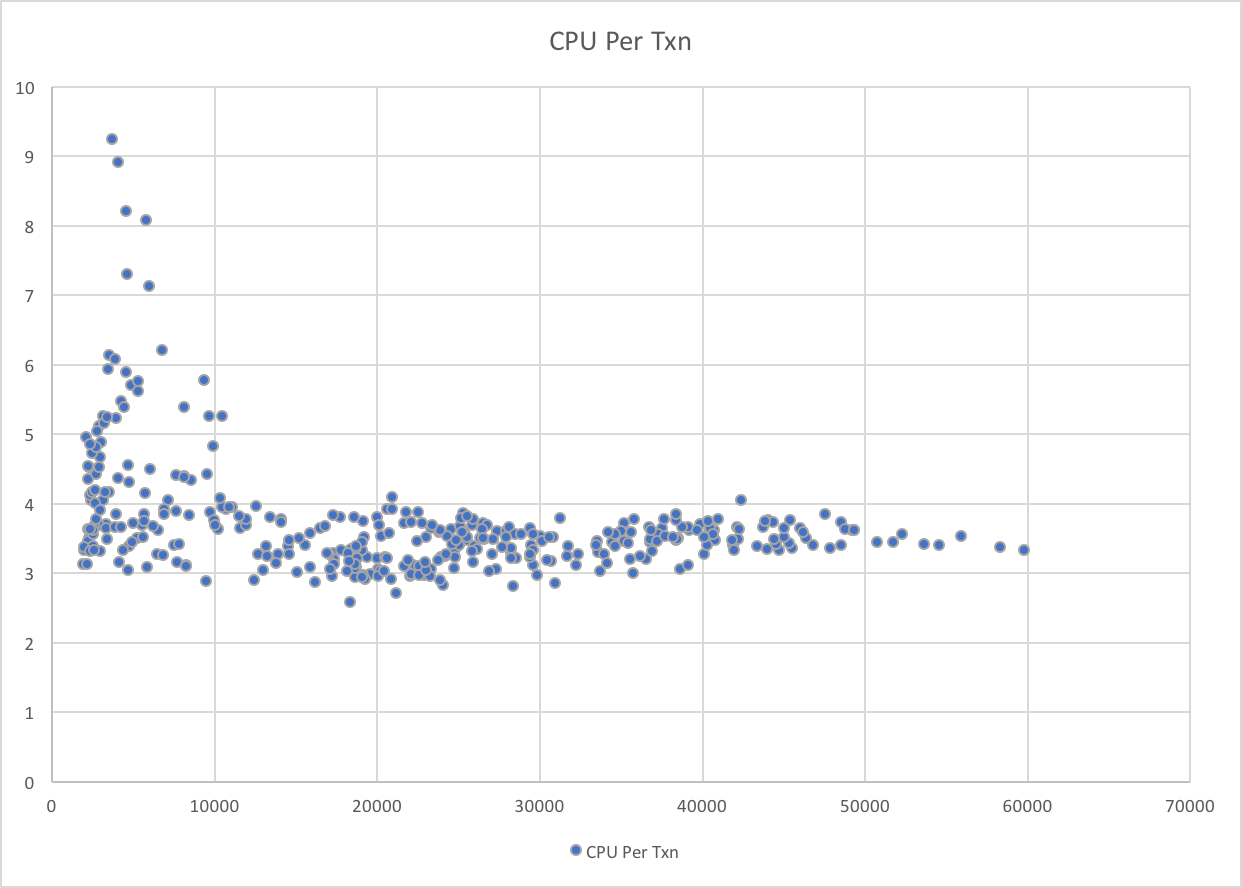

DDF is an interesting case: You would find the DIST address space for the subsystem5 and examine the Enclave-related fields. I wrote about this in DB2 DDF Transaction Rates Without Tears But essentially you can pick up the DDF Transaction Rate and DDF CPU.

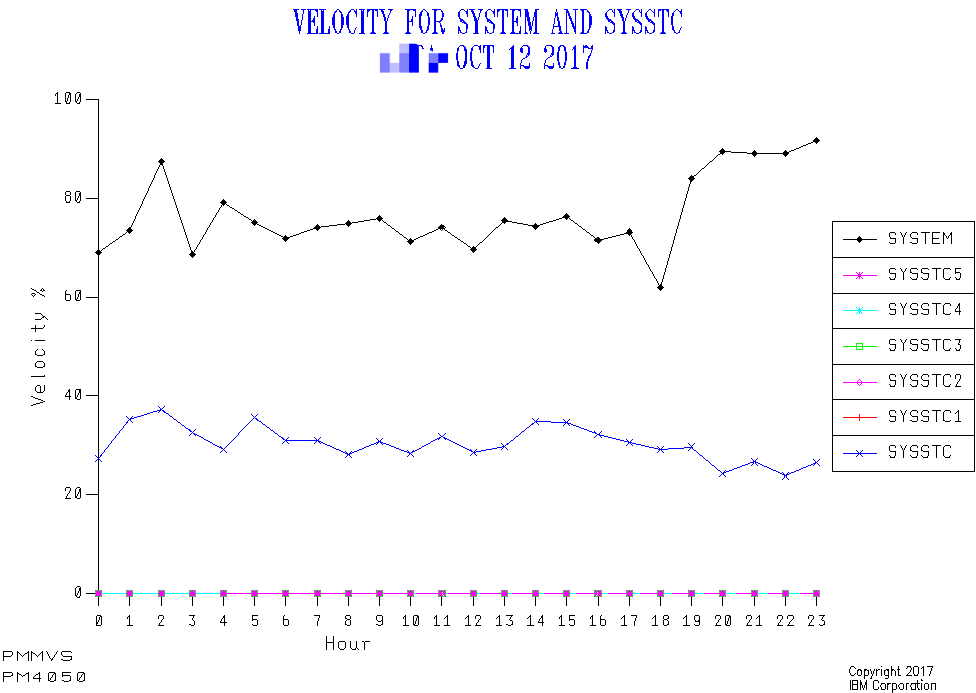

So, let’s take a real example. Here is a DB2 Datasharing group with 6 members, running on 2 LPARs.

The numbers are from my prototype code. All numbers are multi-hour (shift) averages.

Looking at SYSA first:

- DB1A has a large amount of CICS work (over 7 engines’ worth) from 14 regions. It also has a very small amount of DDF work. “Other” here is mainly Batch and it is about an engine’s worth.

- DB2A also has lots of CICS work – over 7 engines’ worth from 16 regions. DDF work is much more significant than on DB1A – at about half an engine. “Other” is again similar.

- DB3A has very little work to it – just a small amount of DDF.

Turning to SYSB:

- DB1B has 11 CICS regions, using 5 engines’ worth of CPU and a small amount of DDF. Again “Other” is just over an engine’s worth.

- DB2B is about as lightly used as DB3A – with a smattering of DDF and a littl bit of “Other”.

- DB3B has 17 CICS regions, using 6.4 engines’ worth of CPU. DDF here is a significant workload, similar to that on DB2A. Here is uses about 1/3 of an engine. “Other” is about 1 engine’s worth of CPU.

So, these are “broad brush” characterisations but I think they are useful. For example, the 2 DDF-oriented subsystems (DB3A and DB2B) might be easy to fold into other subsystems. I say this because they are light on transaction rates and CPU so should pose no threat to the scalability of other subsystems in the Datasharing group.

Conclusion

Much of this instrumentation you might’ve seen before. The trick here is to invert the question “what does this address space connect to?” to yield “what connects to my DB2?” And that way you get a view of the role of the DB2 subsystem.

Of course, this is not nearly as deep an analysis as you could do with DB2 Accounting Trace – such as what transactions get at a DB2. But it is much lighter and more scalable. It’s great for a first pass – which might be all I get to do.

One other thought: This feels like the beginning of a journey to characterise DB2 subsystems. One could, for example, use WLM Service Classes6 for classifying each DB2’s role. This wouldn’t work for DDF but for other work you could sum up the 30’s by Service Class instead of e.g. “CICS”.

-

Though, with “self service” models, DB2 subsystems can pop up without much governance. ↩

-

Even with rather fewer DB2 subsystems, you’d rather not process Accounting Trace if you don’t need to. ↩

-

Pardon the gratuitous visual pun. 🙂 ↩

-

You can’t use program name here to find IMS work as not all IMS work has a mnemonic “IMS” program name. ↩

-

Perhaps this is stating the obvious but if the subsystem is DB2A the DIST address space is DB2ADIST. ↩

-

Or indeed Report Classes. ↩